Computer programs can now create never-before-seen images in seconds.

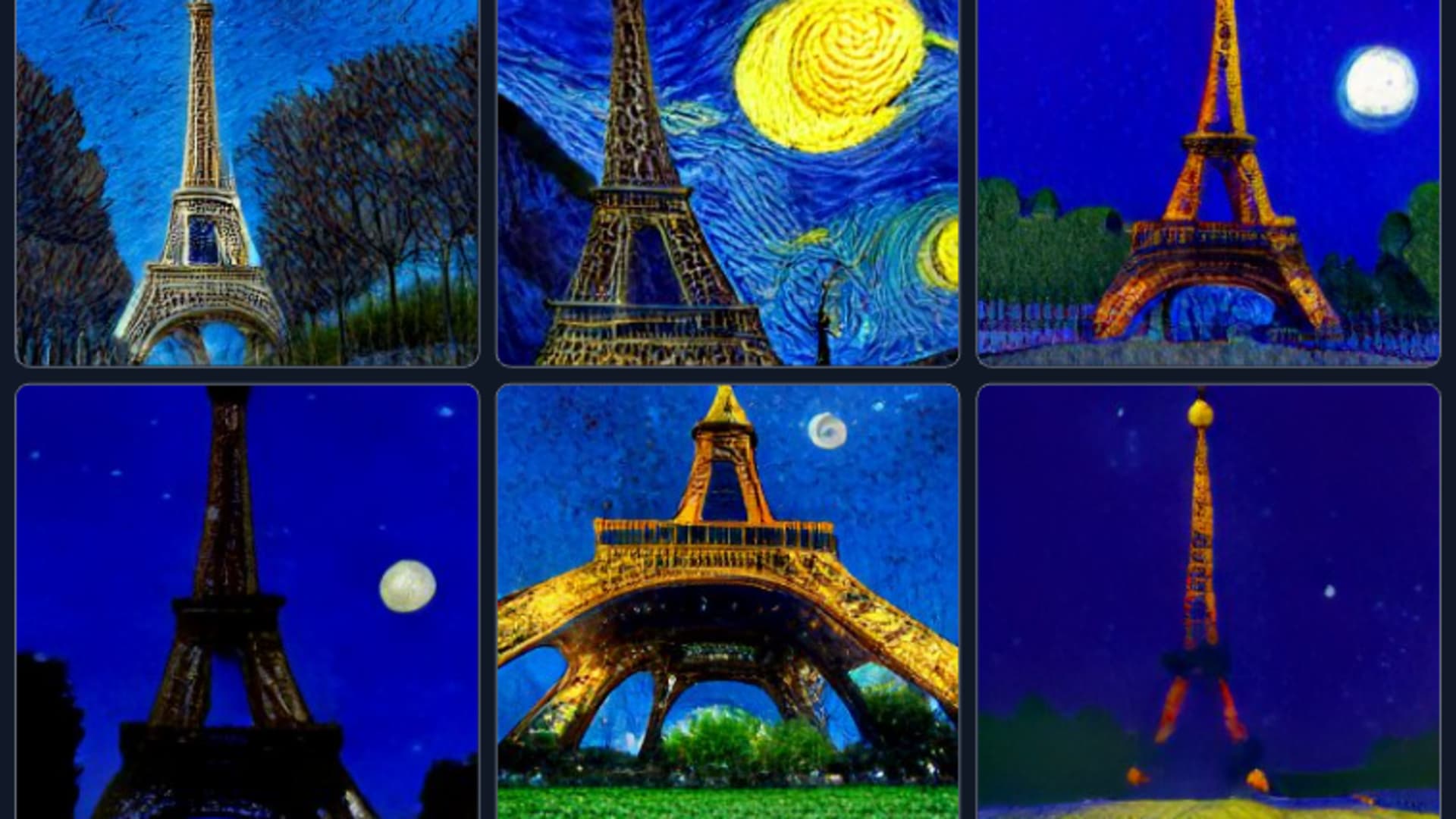

Feed one of these programs some words, and it will usually spit out a picture that actually matches the description, no matter how bizarre.

The pictures aren’t perfect. They often feature hands with extra fingers or digits that bend and curve unnaturally. Image generators have issues with text, coming up with nonsensical signs or making up their own alphabet.

But these image-generating programs — which look like toys today — could be the start of a big wave in technology. Technologists call them generative models, or generative AI.

“In the last three months, the words ‘generative AI’ went from, ‘no one even discussed this’ to the buzzword du jour,” said David Beisel, a venture capitalist at NextView Ventures.

In the past year, generative AI has gotten so much better that it’s inspired people to leave their jobs, start new companies and dream about a future where artificial intelligence could power a new generation of tech giants.

The field of artificial intelligence has been having a boom phase for the past half-decade or so, but most of those advancements have been related to making sense of existing data. AI models have quickly grown efficient enough to recognize whether there’s a cat in a photo you just took on your phone and reliable enough to power Google search results billions of times per day.

But generative AI models can produce something entirely new that wasn’t there before — in other words, they’re creating, not just analyzing.

“The impressive part, even for me, is that it’s able to compose new stuff,” said Boris Dayma, creator of the Craiyon generative AI. “It’s not just creating old images, it’s new things that can be completely different to what it’s seen before.”

Sequoia Capital, the most successful venture capital firm in the history of the industry with early bets on companies like Apple and Google, says in a blog post on its website that “Generative AI has the potential to generate trillions of dollars of economic value.” The VC firm predicts that generative AI could change every industry that requires humans to create original work, from gaming to advertising to law.

In a twist, Sequoia also notes in the post that the message was partially written by GPT-3, a generative AI that produces text.

How generative AI works

Image generation uses techniques from a subset of machine learning called deep learning, which has driven most of the advancements in the field of artificial intelligence since a landmark 2012 paper about image classification ignited renewed interest in the technology.

Deep learning uses models trained on large sets of data until the program understands relationships in that data. Then the model can be used for applications, like identifying if a picture has a dog in it, or translating text.
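To make the idea of "training until the program understands relationships in the data" concrete, here is a minimal sketch at toy scale. It is not a deep network: it fits a single line to five example points with gradient descent, which is the same adjust-and-repeat loop that larger models rely on. The function name `train`, the data and the learning rate are all illustrative assumptions, not taken from any system mentioned in this article.

```python
# Toy training loop: learn y = w*x + b from examples using gradient descent.
# Every name and number here is illustrative, not from a real AI system.

def train(xs, ys, learning_rate=0.05, steps=2000):
    w, b = 0.0, 0.0  # the model starts out knowing nothing about the data
    n = len(xs)
    for _ in range(steps):
        # Prediction error on every example with the current parameters.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradient of the mean squared error with respect to w and b.
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        # Nudge both parameters in the direction that shrinks the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [2.0 * x + 1.0 for x in xs]  # the hidden relationship is y = 2x + 1
w, b = train(xs, ys)
print(round(w, 3), round(b, 3))
```

After training, the learned values should land very close to 2 and 1, meaning the program has recovered the relationship purely from examples. Real deep learning models repeat this kind of loop over millions or billions of parameters rather than two.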

Image generators work by turning this process on its head. Instead of translating from English to French, for example, they translate an English phrase into an image. They usually have two main parts, one that processes the initial phrase, and the second that turns that data into an image.

The first wave of generative AIs was based on an approach called a GAN, short for generative adversarial network. GANs were famously used in a tool that generates photos of people who don’t exist. Essentially, they work by having two AI models compete against each other: one generates images and the other judges whether they look real, which pushes the generator toward more convincing results.
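The competition inside a GAN can be illustrated with the two scores the models try to minimize. The sketch below is an assumption-laden toy, not a working image generator: the "images" are single numbers, the discriminator is a hypothetical one-line logistic model with hand-picked settings `w` and `c`, and nothing is actually trained. It only shows that whatever lowers the generator's loss raises the discriminator's, and vice versa.

```python
import math

def discriminator(x, w, c):
    """Return the discriminator's estimated probability that sample x is real."""
    return 1.0 / (1.0 + math.exp(-(w * x + c)))

def discriminator_loss(real, fake, w, c):
    # Low when real samples score near 1 and fake samples score near 0.
    real_term = -sum(math.log(discriminator(x, w, c)) for x in real) / len(real)
    fake_term = -sum(math.log(1.0 - discriminator(x, w, c)) for x in fake) / len(fake)
    return real_term + fake_term

def generator_loss(fake, w, c):
    # Low when the discriminator is fooled into scoring fakes near 1.
    return -sum(math.log(discriminator(x, w, c)) for x in fake) / len(fake)

# Illustrative data: "real" samples cluster around 4.
real = [3.5, 4.0, 4.5]
clumsy_fakes = [-0.5, 0.0, 0.5]       # nowhere near the real samples
convincing_fakes = [3.8, 4.1, 4.3]    # close to the real samples
w, c = 1.0, -2.0  # hypothetical discriminator: treats x > 2 as probably real

for name, fake in [("clumsy", clumsy_fakes), ("convincing", convincing_fakes)]:
    g = generator_loss(fake, w, c)
    d = discriminator_loss(real, fake, w, c)
    print(name, "generator loss:", round(g, 2), "discriminator loss:", round(d, 2))
```

In a full GAN, both models would be updated in alternating steps, so the discriminator would adapt to the convincing fakes and the generator would have to improve again. This sketch freezes the discriminator to keep the opposing incentives easy to see.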

Newer approaches generally use transformers, which were first described in a 2017 Google paper. It’s an emerging technique that can take advantage of bigger datasets, though the resulting models can cost millions of dollars to train.

The first image generator to gain a lot of attention was DALL-E, a program announced in 2021 by OpenAI, a well-funded startup in Silicon Valley. OpenAI released a more powerful version this year.

“With DALL-E 2, that’s really the moment when sort of we crossed the uncanny valley,” said Christian Cantrell, a developer focusing on generative AI.

Another commonly used AI-based image generator is Craiyon, formerly known as DALL-E Mini, which is available on the web. Users can type in a phrase and see it illustrated in minutes in their browser.

Since launching in July 2021, Craiyon has grown to generate about 10 million images a day, adding up to 1 billion images that have never existed before, according to Dayma. He’s made Craiyon his full-time job after usage skyrocketed earlier this year. He says he’s focused on using advertising to keep the website free to users because the site’s server costs are high.

A Twitter account dedicated to the weirdest and most creative images on Craiyon has over 1 million followers, and regularly serves up images of increasingly improbable or absurd scenes. For example: An Italian sink with a tap that dispenses marinara sauce or Minions fighting in the Vietnam War.

But the program that has inspired the most tinkering is Stable Diffusion, which was released to the public in August. The code for it is available on GitHub and can be run on a user’s own computer, not just in the cloud or through a programming interface. That has inspired users to tweak the program’s code for their own purposes, or build on top of it.

For example, Stable Diffusion was integrated into Adobe Photoshop through a plug-in, allowing users to generate backgrounds and other parts of images that they can then directly manipulate inside the application using layers and other Photoshop tools, turning generative AI from something that produces finished images into a tool that can be used by professionals.

“I wanted to meet creative professionals where they were and I wanted to empower them to bring AI into their workflows, not blow up their workflows,” said Cantrell, developer of the plug-in.

Cantrell, who was a 20-year Adobe veteran before leaving his job this year to focus on generative AI, says the plug-in has been downloaded tens of thousands of times. Artists tell him they use it in myriad ways that he couldn’t have anticipated, such as animating Godzilla or creating pictures of Spider-Man in any pose the artist could imagine.

“Usually, you start from inspiration, right? You’re looking at mood boards, those kinds of things,” Cantrell said. “So my initial plan with the first version, let’s get past the blank canvas problem, you type in what you’re thinking, just describe what you’re thinking and then I’ll show you some stuff, right?”

An emerging art in working with generative AIs is how to frame the “prompt,” or string of words that leads to the image. A search engine called Lexica catalogs Stable Diffusion images and the exact string of words that can be used to generate them.

Guides have popped up on Reddit and Discord describing tricks that people have discovered to dial in the kind of picture they want.

Startups, cloud providers, and chip makers could thrive

Some investors are looking at generative AI as a potentially transformative platform shift, like the smartphone or the early days of the web. These kinds of shifts greatly expand the total addressable market of people who might be able to use the technology, moving from a few dedicated nerds to business professionals — and eventually everyone else.

“It’s not as though AI hadn’t been around before this — and it wasn’t like we hadn’t had mobile before 2007,” said Beisel, the seed investor. “But it’s like this moment where it just kind of all comes together. That real people, like end-user consumers, can experiment and see something that’s different than it was before.”

Cantrell sees generative machine learning as akin to an even more foundational technology: the database. Originally pioneered by companies like Oracle in the 1970s as a way to store and organize discrete bits of information in clearly delineated rows and columns (think of an enormous Excel spreadsheet), databases have been re-envisioned to store every type of data for every conceivable type of computing application from the web to mobile.

“Machine learning is kind of like databases, where databases were a huge unlock for web apps. Almost every app you or I have ever used in our lives is on top of a database,” Cantrell said. “Nobody cares how the database works, they just know how to use it.”

Michael Dempsey, managing partner at Compound VC, says moments where technologies previously limited to labs break into the mainstream are “very rare” and attract a lot of attention from venture investors, who like to make bets on fields that could be huge. Still, he warns that this moment in generative AI might end up being a “curiosity phase” closer to the peak of a hype cycle. And companies founded during this era could fail because they don’t focus on specific uses that businesses or consumers would pay for.

Others in the field believe that startups pioneering these technologies today could eventually challenge the software giants that currently dominate the artificial intelligence space, including Google, Facebook parent Meta and Microsoft, paving the way for the next generation of tech giants.

“There’s going to be a bunch of trillion-dollar companies — a whole generation of startups who are going to build on this new way of doing technologies,” said Clement Delangue, the CEO of Hugging Face, a developer platform like GitHub that hosts pre-trained models, including those for Craiyon and Stable Diffusion. Its goal is to make AI technology easier for programmers to build on.

Some of these firms are already sporting significant investment.

Hugging Face was valued at $2 billion after raising money earlier this year from investors including Lux Capital and Sequoia; and OpenAI, the most prominent startup in the field, has received over $1 billion in funding from Microsoft and Khosla Ventures.

Meanwhile, Stability AI, the maker of Stable Diffusion, is in talks to raise venture funding at a valuation of as much as $1 billion, according to Forbes. A representative for Stability AI declined to comment.

Cloud providers like Amazon, Microsoft and Google could also benefit because generative AI can be very computationally intensive.

Meta and Google have hired some of the most prominent talent in the field in hopes that advances can be integrated into company products. In September, Meta announced an AI program called “Make-A-Video” that takes the technology one step further by generating videos, not just images.

“This is pretty amazing progress,” Meta CEO Mark Zuckerberg said in a post on his Facebook page. “It’s much harder to generate video than photos because beyond correctly generating each pixel, the system also has to predict how they’ll change over time.”

On Wednesday, Google matched Meta, announcing and releasing code for a program called Phenaki that also does text-to-video and can generate minutes of footage.

The boom could also bolster chipmakers like Nvidia, AMD and Intel, which make the kind of advanced graphics processors that are ideal for training and deploying AI models.

At a conference last week, Nvidia CEO Jensen Huang highlighted generative AI as a key use for the company’s newest chips, saying these kinds of programs could soon “revolutionize communications.”

Profitable end uses for generative AI are currently rare. A lot of today’s excitement revolves around free or low-cost experimentation. For example, some writers have experimented with using image generators to make images for articles.

One example of Nvidia’s work is the use of a model to generate new 3D images of people, animals, vehicles or furniture that can populate a virtual game world.

Ethical issues

Ultimately, everyone developing generative AI will have to grapple with some of the ethical issues that come up from image generators.

First, there’s the jobs question. Even though many programs require a powerful graphics processor, computer-generated content is still going to be far less expensive than the work of a professional illustrator, which can cost hundreds of dollars per hour.

That could spell trouble for artists, video producers and other people whose job it is to generate creative work. For example, a person whose job is choosing images for a pitch deck or creating marketing materials could be replaced by a computer program very shortly.

“It turns out, machine-learning models are probably going to start being orders of magnitude better and faster and cheaper than that person,” said Compound VC’s Dempsey.

There are also complicated questions around originality and ownership.

Generative AIs are trained on huge amounts of images, and it’s still being debated in the field and in courts whether the creators of the original images have any copyright claims on images generated to be in the original creator’s style.

One artist won an art competition in Colorado using an image largely created by a generative AI called Midjourney, although he said in interviews after he won that he chose the image from among hundreds he generated and then tweaked it in Photoshop.

Some images generated by Stable Diffusion seem to have watermarks, suggesting that part of the original datasets was copyrighted. Some prompt guides recommend using specific living artists’ names in prompts in order to get better results that mimic the style of that artist.

Last month, Getty Images banned users from uploading generative AI images into its stock image database, because it was concerned about legal challenges around copyright.

Image generators can also be used to create new images of trademarked characters or objects, such as the Minions, Marvel characters or the throne from Game of Thrones.

As image-generating software gets better, it also has the potential to fool users into believing false information or to display images or videos of events that never happened.

Developers also have to grapple with the possibility that models trained on large amounts of data may have biases related to gender, race or culture included in the data, which can lead to the model displaying that bias in its output. For its part, Hugging Face, the model-sharing website, publishes materials such as an ethics newsletter and holds talks about responsible development in the AI field.

“What we’re seeing with these models is one of the short-term and existing challenges is that because they’re probabilistic models, trained on large datasets, they tend to encode a lot of biases,” Delangue said, offering an example of a generative AI drawing a picture of a “software engineer” as a white man.